The Unintended Orchestrator – O’Reilly

This is the first article in a series on agentic engineering and AI-driven development. Look for the next article on March 19 on O’Reilly Radar.

There’s been a lot of hype about AI and software development, and it comes in two flavors. One says, “We’re all doomed; tools like Claude Code will make software engineering obsolete within a year.” The other says, “Don’t worry, everything’s fine; AI is just another tool in the toolbox.” Neither is honest.

I’ve spent over 20 years writing about software development for practitioners, covering everything from coding and architecture to project management and team dynamics. For the last two years I’ve been focused on AI: training developers to use these tools effectively and writing about what works and what doesn’t in books, articles, and reports. And I kept running into the same problem: I had yet to find anyone with a coherent answer for how experienced developers should actually work with these tools. There are plenty of tips and lots of hype but very little structure, and very little you can practice, teach, critique, or improve.

I’d been observing developers at work using AI with varying levels of success, and I realized we need to start thinking about this as its own discipline. Andrej Karpathy, the former head of AI at Tesla and a founding member of OpenAI, recently proposed the term “agentic engineering” for disciplined development with AI agents, and others like Addy Osmani are getting on board. Osmani’s framing is that AI agents handle implementation but the human owns the architecture, reviews every diff, and tests relentlessly. I think that’s right.

But I’ve spent a lot of the last two years teaching developers how to use tools like Claude Code, agent mode in Copilot, Cursor, and others, and what I keep hearing is that they already know they should be reviewing the AI’s output, maintaining the architecture, writing tests, keeping documentation current, and staying in control of the codebase. They know how to do it in theory. But they get stuck trying to apply it in practice: How do you actually review thousands of lines of AI-generated code? How do you keep the architecture coherent when you’re working across multiple AI tools over weeks? How do you know when the AI is confidently wrong? And it’s not just junior developers who are having trouble with agentic engineering. I’ve talked to senior engineers who struggle with the shift to agentic tools, and intermediate developers who take to it naturally. The difference isn’t necessarily years of experience; it’s whether they’ve figured out an effective, structured way to work with AI coding tools. That gap between knowing what developers should be doing with agentic engineering and knowing how to integrate it into their day-to-day work is a real source of anxiety for a lot of engineers right now. That’s the gap this series is trying to fill.

Despite what much of the hype about agentic engineering is telling you, this kind of development doesn’t eliminate the need for developer expertise; just the opposite. Working effectively with AI agents actually raises the bar for what developers need to know. I wrote about that experience gap in an earlier O’Reilly Radar piece called “The Cognitive Shortcut Paradox.” The developers who get the most from working with AI coding tools are the ones who already know what good software looks like, and can often tell whether the AI wrote it.

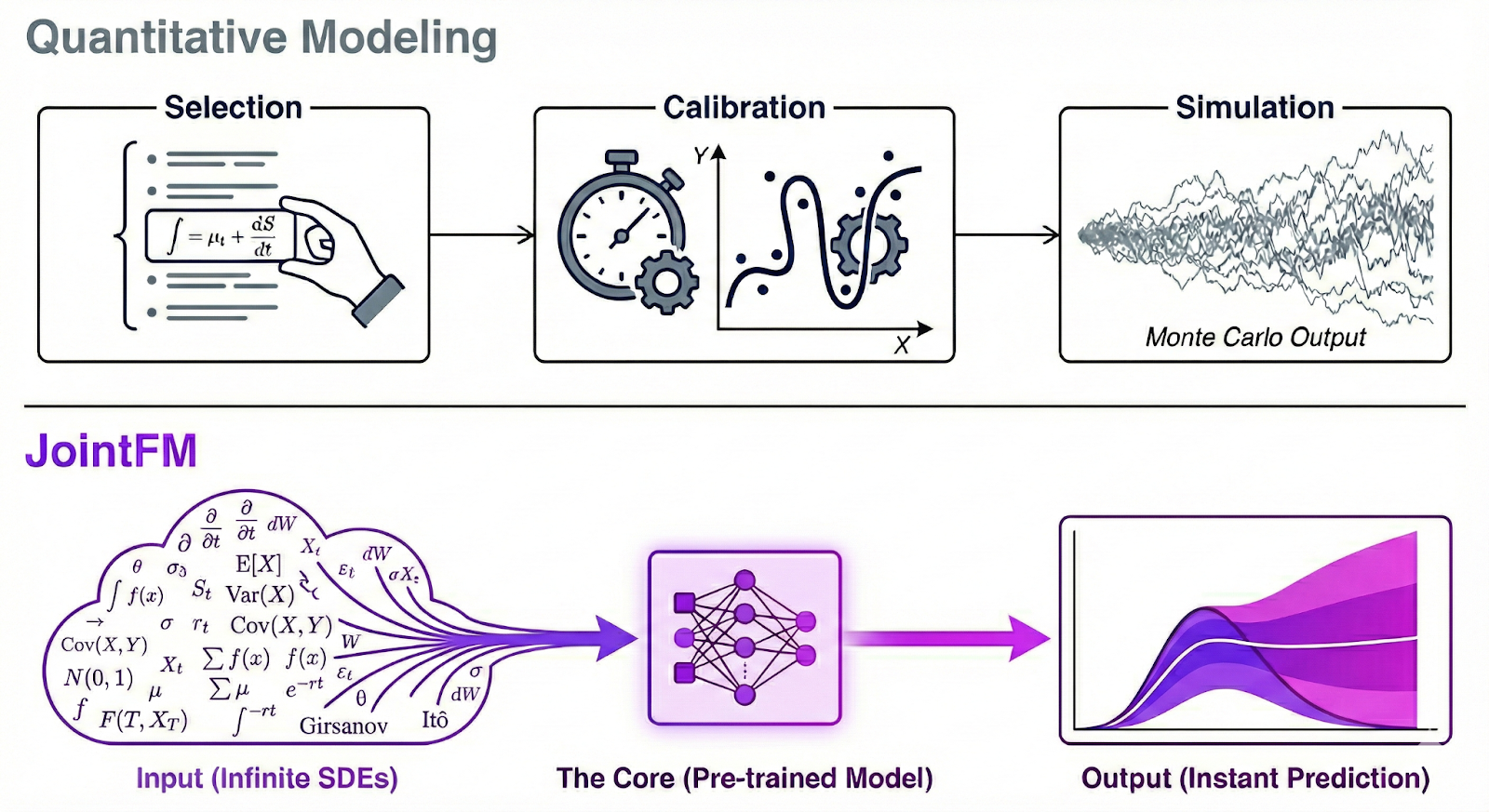

The idea that AI tools work best when experienced developers are driving them matched everything I’d observed. It rang true, and I wanted to prove it in a way that other developers would understand: by building software. So I started building a specific, practical approach to agentic engineering for developers to follow, and then I put it to the test. I used it to build a production system from scratch, with the rule that AI would write all the code. I needed a project that was complex enough to stress-test the approach and interesting enough to keep me engaged through the hard parts. I wanted to apply everything I’d learned and discover what I still didn’t know. That’s when I came back to Monte Carlo simulations.

The experiment

I’ve been obsessed with Monte Carlo simulations ever since I was a kid. My dad’s an epidemiologist; his whole career has been about finding patterns in messy population data, which means statistics was always part of our lives (and it also means I learned SPSS at a very early age). When I was maybe 11 he told me about the drunken sailor problem: A sailor leaves a bar on a pier, taking a random step toward the water or toward his ship each time. Does he fall in or make it home? You can’t know from any single run. But run the simulation a thousand times, and the pattern emerges from the noise. The individual outcome is random; the aggregate is predictable.

I remember writing that simulation in BASIC on my TRS-80 Color Computer 2: a little blocky sailor stumbling across the screen, two steps forward, one step back. The drunken sailor is the “Hello, world” of Monte Carlo simulations. Monte Carlo is a technique for problems you can’t solve analytically: You simulate them hundreds or thousands of times and measure the aggregate results. Each individual run is random, but the statistics converge on the true answer as the sample size grows. It’s how we model everything from nuclear physics to financial risk to the spread of disease across populations.
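To make the idea concrete, here is a minimal Python sketch of the drunken sailor as a Monte Carlo simulation. The pier length, the midpoint starting position, and the function names are assumptions for illustration. The point is that one seeded generator makes the whole experiment reproducible while each individual walk stays random.

```python
import random


def sailor_falls_in(rng: random.Random, pier_length: int = 10) -> bool:
    """Simulate one walk; return True if the sailor ends up in the water."""
    position = pier_length // 2  # start midway between the water (0) and the ship (pier_length)
    while 0 < position < pier_length:
        position += rng.choice((-1, 1))  # one random step toward the water or the ship
    return position == 0


def estimate_fall_rate(runs: int, seed: int = 42, pier_length: int = 10) -> float:
    """Run many independent walks and return the fraction that end in the water."""
    rng = random.Random(seed)  # one seeded generator for the whole experiment: reproducible
    falls = sum(sailor_falls_in(rng, pier_length) for _ in range(runs))
    return falls / runs


if __name__ == "__main__":
    for runs in (10, 100, 1_000, 10_000):
        print(runs, estimate_fall_rate(runs))
```

Because the sailor starts exactly halfway, the estimate should settle near 0.5 as the number of runs grows, even though no single walk is predictable.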

What if you could run that kind of simulation today by describing it in plain English? Not a toy demo but thousands of iterations with seeded randomness for reproducibility, where the outputs get validated and the results get aggregated into real statistics you can use. Or a pipeline where an LLM generates content, a second LLM scores it, and anything that doesn’t pass gets sent back for another try.
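The generate-then-score loop in that second example can be sketched in a few lines. Here `generate` and `score` are hypothetical stand-ins for two LLM calls, and the 0.7 threshold and three-attempt limit are arbitrary assumptions, not values taken from Octobatch.

```python
from typing import Callable, Optional, Tuple


def generate_with_review(
    item: str,
    generate: Callable[[str, int], str],  # hypothetical: first LLM, gets the item and attempt number
    score: Callable[[str], float],        # hypothetical: second LLM, returns a quality score in [0, 1]
    threshold: float = 0.7,
    max_attempts: int = 3,
) -> Tuple[Optional[str], int]:
    """Return (accepted output, attempts used); output is None if nothing passed review."""
    for attempt in range(1, max_attempts + 1):
        candidate = generate(item, attempt)
        if score(candidate) >= threshold:
            return candidate, attempt
    # Every attempt failed review: report it instead of silently accepting a bad result
    return None, max_attempts


# Stubs standing in for real model calls
def fake_generate(item: str, attempt: int) -> str:
    return f"{item} draft {attempt}"


def fake_score(text: str) -> float:
    return 0.9 if text.endswith("2") else 0.3


if __name__ == "__main__":
    print(generate_with_review("NPC greeting", fake_generate, fake_score))
    # → ('NPC greeting draft 2', 2)
```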

The goal of my experiment was to build that system, which I called Octobatch. Right now, the industry is constantly looking for new real-world, end-to-end case studies in agentic engineering, and I wanted Octobatch to be exactly that case study.

I took everything I’d learned from teaching and observing developers working with AI, put it to the test by building a real system from scratch, and turned the lessons into a structured approach to agentic engineering I’m calling AI-driven development, or AIDD. This is the first article in a series about what agentic engineering looks like in practice, what it demands from the developer, and how you can apply it to your own work.

The result is a fully functioning, well-tested application of about 21,000 lines of Python across several dozen files, backed by complete specifications, nearly a thousand automated tests, and high-quality integration and regression test suites. I used Claude Cowork to review all the AI chats from the entire project, and it turns out that I built the whole application in roughly 75 hours of active development time over seven weeks. For comparison, I built Octobatch in just over half the time I spent last year playing Blue Prince.

But this series isn’t just about Octobatch. I integrated AI tools at every level: Claude and Gemini collaborating on architecture, Claude Code writing the implementation, and LLMs generating the pipelines that run on the system they helped build. This series is about what I learned from that process: the patterns that worked, the failures that taught me the most, and the orchestration mindset that ties it all together. Each article pulls a different lesson from the experiment, from validation architecture to multi-LLM coordination to the values that kept the project on track.

Agentic engineering and AI-driven development

When most people talk about using AI to write code, they mean one of two things: AI coding assistants like GitHub Copilot, Cursor, or Windsurf, which have evolved well beyond autocomplete into agentic tools that can run multifile editing sessions and define custom agents; or “vibe coding,” where you describe what you want in natural language and accept whatever comes back. These coding assistants are genuinely impressive, and vibe coding can be really productive.

Using these tools effectively on a real project, however, while maintaining architectural coherence across thousands of lines of AI-generated code, is a different problem entirely. AIDD aims to help solve that problem. It’s a structured approach to agentic engineering where AI tools drive substantial parts of the implementation, architecture, and even project management, while you, the human in the loop, decide what gets built and whether it’s any good. By “structure,” I mean a set of practices developers can learn and follow, a way to know whether the AI’s output is actually good, and a way to stay on track across the life of a project. If agentic engineering is the discipline, AIDD is one way to practice it.

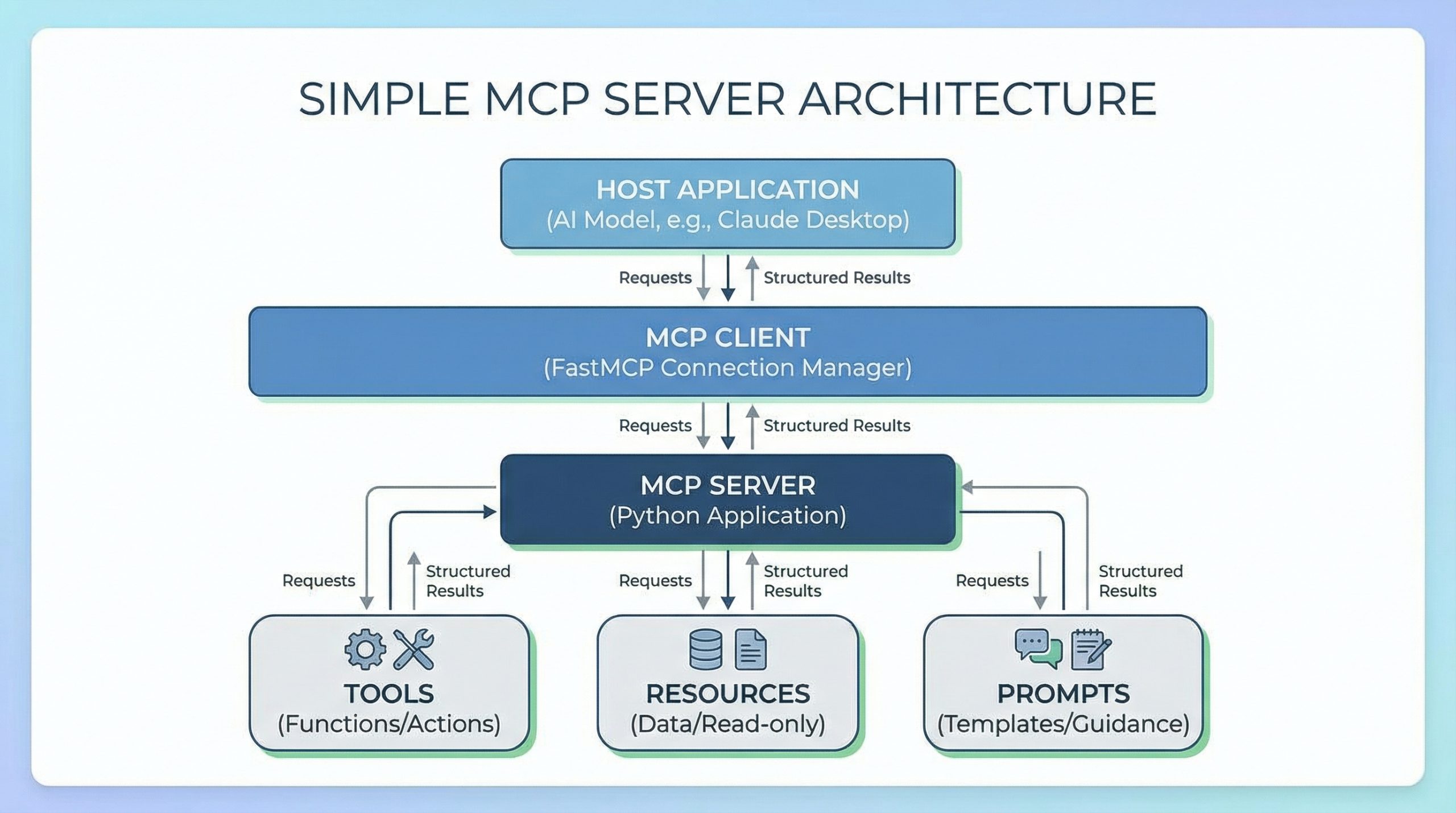

In AI-driven development, developers don’t just accept suggestions or hope the output is correct. They assign specific roles to specific tools: one LLM for architecture planning, another for code execution, a coding agent for implementation, and the human for vision, verification, and the decisions that require understanding the whole system.

And the “driven” part is literal. The AI writes almost all of the code. One of my ground rules for the Octobatch experiment was that I’d let AI write all of it. I have high code quality standards, and part of the experiment was seeing whether AIDD could produce a system that meets them. The human decides what gets built, evaluates whether it’s right, and maintains the constraints that keep the system coherent.

Not everyone agrees on how much the developer needs to stay in the loop, and the fully autonomous end of the spectrum is already producing cautionary tales. Nicholas Carlini at Anthropic recently tasked 16 Claude instances with building a C compiler in parallel with no human in the loop. After 2,000 sessions and $20,000 in API costs, the agents produced a 100,000-line compiler that can build a Linux kernel but isn’t a drop-in replacement for anything, and when all 16 agents got stuck on the same bug, Carlini had to step back in and partition the work himself. Even strong advocates of a completely hands-off, vibe-driven approach to agentic engineering might call that a step too far. The question is how much human judgment you need to make that code trustworthy, and what specific practices help you apply that judgment effectively.

The orchestration mindset

If you want to get developers thinking about agentic engineering in the right way, you have to start with how they think about working with AI, not just what tools they use. That’s where I started when I began building a structured approach, and it’s why I started with habits. I developed a framework for these called the Sens-AI Framework, published as both an O’Reilly report (Critical Thinking Habits for Coding with AI) and a Radar series. It’s built around five practices: providing context, doing research before prompting, framing problems precisely, iterating deliberately on outputs, and applying critical thinking to everything the AI produces. I started there because habits are how you lock in the way you think about how you’re working. Without them, AI-driven development produces plausible-looking code that falls apart under scrutiny. With them, it produces systems that a single developer couldn’t build alone in the same timeframe.

Habits are the foundation, but they’re not the whole picture. AIDD also has practices (concrete techniques like multi-LLM coordination, context file management, and using one model to validate another’s output) and values (the principles behind those practices). If you’ve worked with Agile methodologies like Scrum or XP, that structure should be pretty familiar: Practices tell you how to work day-to-day, and habits are the reflexes you develop so that the practices become automatic.

Values often seem oddly theoretical, but they’re an important piece of the puzzle because they guide your decisions when the practices don’t give you a clear answer. There’s an emerging culture around agentic engineering right now, and the values you bring to your project either match or clash with that culture. Understanding where the values come from is what makes the practices stick. All of that leads to a whole new mindset, what I’m calling the orchestration mindset. This series builds all four layers, using Octobatch as the proving ground.

Octobatch was a deliberate experiment in AIDD. I designed the project as a test case for the whole approach, to see what a disciplined AI-driven workflow could produce and where it would break down, and I used it to apply and improve the practices and values so they’d be effective and easy to adopt. And whether by instinct or coincidence, I picked the perfect project for this experiment. Octobatch is a batch orchestrator. It coordinates asynchronous jobs, manages state across failures, tracks dependencies between pipeline steps, and makes sure validated results come out the other end. That kind of system is fun to design, but a lot of the details, like state machines, retry logic, crash recovery, and cost accounting, can be tedious to implement. It’s exactly the kind of work where AIDD should shine, because the patterns are well understood but the implementation is repetitive and error-prone.

Orchestration, the work of coordinating multiple independent processes toward a coherent outcome, evolved into a core idea behind AIDD. I found myself orchestrating LLMs the same way Octobatch orchestrates batch jobs: assigning roles, managing handoffs, validating outputs, recovering from failures. The system I was building and the process I was using to build it followed the same pattern. I didn’t expect it when I started, but building a system that orchestrates AI turns out to be a pretty good way to learn how to orchestrate AI. That’s the unintended part of the unintended orchestrator. That parallel runs through every article in this series.


The path to batch

I didn’t begin the Octobatch project with a full end-to-end Monte Carlo simulation. I started where most people start: typing prompts into a chat interface. I was experimenting with different simulation and generation ideas to give the project some structure, and a few of them stuck. A blackjack strategy comparison turned out to be a great test case for a multistep Monte Carlo simulation. NPC dialogue generation for a role-playing game gave me a creative workload with subjective quality to measure. Both had the same shape: a set of structured inputs, each processed the same way. So I had Claude write a simple script to automate what I’d been doing by hand, and I used Gemini to double-check the work, make sure Claude really understood my ask, and fix hallucinations. It worked fine at small scale, but once I started running more than 100 or so units, I kept hitting rate limits, the caps that providers put on how many API requests you can make per minute.

That’s what pushed me to LLM batch APIs. Instead of sending individual prompts one at a time and waiting for each response, you can use the batch APIs that all the major LLM providers offer to submit a file containing all your requests at once. The provider processes them on its own schedule; you wait for results instead of getting them immediately, but you don’t have to worry about rate caps. I was happy to discover they also cost 50% less, and that’s when I started tracking token usage and costs in earnest. But the real surprise was that batch APIs performed better than real-time APIs at scale. Once pipelines got past the 100- or 200-unit mark, batch started running significantly faster than real time. The provider processes the whole batch in parallel on its infrastructure, so you’re no longer bottlenecked by round-trip latency or rate caps.
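Because the batch discount is a flat percentage, cost tracking reduces to simple arithmetic over token counts. This sketch estimates a workload’s cost both ways. The per-million-token prices and the average tokens per request are placeholders, not any provider’s actual rates; the 0.5 multiplier reflects the 50% batch pricing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_million: float       # USD per 1M input tokens (placeholder value, not a real price)
    output_per_million: float      # USD per 1M output tokens (placeholder value)
    batch_multiplier: float = 0.5  # batch billed at 50% of the real-time rate


def estimate_cost(pricing: Pricing, input_tokens: int, output_tokens: int, batch: bool) -> float:
    """Estimated USD cost for a workload with the given total token counts."""
    real_time = (input_tokens * pricing.input_per_million
                 + output_tokens * pricing.output_per_million) / 1_000_000
    return real_time * pricing.batch_multiplier if batch else real_time


if __name__ == "__main__":
    p = Pricing(input_per_million=3.0, output_per_million=15.0)
    requests = 10_000
    tokens_in, tokens_out = requests * 800, requests * 300  # assumed average tokens per request
    print(estimate_cost(p, tokens_in, tokens_out, batch=False))  # → 69.0
    print(estimate_cost(p, tokens_in, tokens_out, batch=True))   # → 34.5
```

Running the same estimate before submitting a batch gives a rough answer to “how much am I about to spend?”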

The switch to batch APIs changed how I thought about the whole problem of coordinating LLM API calls at scale, and it led to the idea of configurable pipelines. I could chain stages together: The output of one step could become the input to the next, and I could kick off the whole pipeline and come back to finished results. It turns out I wasn’t the only one making the shift to batch APIs. Between April 2024 and July 2025, OpenAI, Anthropic, and Google all launched batch APIs, converging on the same pricing model: 50% of the real-time rate in exchange for asynchronous processing.
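Stripped of the batch mechanics, a configurable pipeline is an ordered list of stages applied to every record. In this sketch the stage functions are hypothetical placeholders for LLM calls. In a real batch setting each stage would be its own submission, which is why the loop finishes a stage for every record before moving on to the next stage.

```python
from typing import Callable, Dict, Iterable, List

Record = Dict[str, object]
Stage = Callable[[Record], Record]


def run_pipeline(items: Iterable[Record], stages: List[Stage]) -> List[Record]:
    """Run every record through each stage in order; one stage's output feeds the next."""
    records = [dict(item) for item in items]  # copy so the caller's inputs are not mutated
    for stage in stages:
        # All records finish this stage before any record starts the next one,
        # the way each stage would be submitted and collected as its own batch.
        records = [stage(record) for record in records]
    return records


# Hypothetical stages standing in for LLM calls
def draft_line(record: Record) -> Record:
    return {**record, "text": f"Hello from {record['npc']}"}


def measure_line(record: Record) -> Record:
    return {**record, "length": len(str(record["text"]))}


if __name__ == "__main__":
    print(run_pipeline([{"npc": "Guard"}], [draft_line, measure_line]))
    # → [{'npc': 'Guard', 'text': 'Hello from Guard', 'length': 16}]
```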

You probably didn’t notice that all three major AI providers launched batch APIs. The industry conversation was dominated by agents, tool use, MCP, and real-time reasoning. Batch APIs shipped with relatively little fanfare, but they represent a genuine shift in how we can use LLMs. Instead of treating them as conversational partners or one-shot SaaS APIs, we can treat them as processing infrastructure, closer to a MapReduce job than a chatbot. You give them structured data and a prompt template, and they process all of it and hand back the results. What matters is that you can now run tens of thousands of these transformations reliably, at scale, without managing rate limits or connection failures.
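The “structured data plus a prompt template” idea maps directly onto a file of requests. The sketch below renders a template for each row and emits one JSON line per request. The `custom_id`/`prompt` request shape is a simplified, hypothetical one; each provider’s batch API defines its own request schema.

```python
import json
from string import Template
from typing import Dict, List


def build_batch_lines(rows: List[Dict[str, object]], template: str, id_field: str = "id") -> List[str]:
    """Render one prompt per row and serialize each request as a JSON line."""
    tmpl = Template(template)
    lines = []
    for row in rows:
        request = {
            "custom_id": str(row[id_field]),  # used to match each result back to its input row
            "prompt": tmpl.substitute(row),   # raises KeyError if a placeholder has no value
        }
        lines.append(json.dumps(request))
    return lines


if __name__ == "__main__":
    rows = [{"id": 1, "dealer": 10, "hand": 16}, {"id": 2, "dealer": 6, "hand": 12}]
    prompt = "Dealer shows $dealer; player has $hand. Hit or stand?"
    for line in build_batch_lines(rows, prompt):
        print(line)
```

Joining the lines with newlines and writing them to a `.jsonl` file gives one request per line, the general shape batch endpoints accept; the exact fields come from the provider’s documentation.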

Why orchestration?

If batch APIs are so useful, why can’t you just write a for-loop that submits requests and collects results? You can, and for simple cases a quick script with a for-loop works fine. But once you start running larger workloads, the problems start to pile up. Solving those problems turned out to be one of the most important lessons for developing a structured approach to agentic engineering.

First, batch jobs are asynchronous. You submit a job, and results come back hours later, so your script needs to track what was submitted and poll for completion. If your script crashes in the middle, you lose that state. Second, batch jobs can partially fail. Maybe 97% of your requests succeeded and 3% didn’t. Your code needs to figure out which 3% failed, extract them, and resubmit just those items. Third, if you’re building a multistage pipeline where the output of one step feeds into the next, you need to track dependencies between stages. And fourth, you need cost accounting. When you’re running tens of thousands of requests, you want to know how much you spent and, ideally, how much you’re going to spend when you first start the batch. Every one of these has a direct parallel in agentic engineering: keeping track of the work multiple AI agents are doing at once, dealing with code failures and bugs, making sure the whole project stays coherent when AI coding tools are only looking at the part currently in context, and stepping back to look at the broader project management picture.
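The partial-failure problem comes down to a set difference: everything you submitted minus everything that came back successfully. In this sketch the `custom_id` and `status` fields on result records are hypothetical; real providers report per-request outcomes in their own formats.

```python
from typing import Dict, List


def items_to_resubmit(submitted: Dict[str, dict], results: List[dict]) -> Dict[str, dict]:
    """Return the submitted requests that did not come back as successes."""
    succeeded = {r["custom_id"] for r in results if r.get("status") == "succeeded"}
    # Anything not marked as a success goes into the next batch, including
    # requests that are missing from the results entirely.
    return {cid: req for cid, req in submitted.items() if cid not in succeeded}


if __name__ == "__main__":
    submitted = {"hand-1": {"prompt": "..."}, "hand-2": {"prompt": "..."}, "hand-3": {"prompt": "..."}}
    results = [
        {"custom_id": "hand-1", "status": "succeeded"},
        {"custom_id": "hand-2", "status": "errored"},
        # hand-3 never came back
    ]
    print(sorted(items_to_resubmit(submitted, results)))  # → ['hand-2', 'hand-3']
```

Treating “missing” the same as “failed” is the conservative choice: a request that silently disappeared gets retried rather than dropped.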

All of these problems are solvable, but they’re not problems you want to solve over and over (in either situation: when you’re orchestrating LLM batch jobs or orchestrating AI coding tools). Solving these problems in code taught me some interesting lessons about the overall approach to agentic engineering. Batch processing moves the complexity from connection management to state management. Real-time APIs are hard because of rate limits and retries. Batch APIs are hard because you have to track what’s in flight, what succeeded, what failed, and what’s next.
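Moving complexity into state management suggests persisting that state outside the process. The sketch below follows the tick model the article describes later (wake up, check state, do work, persist, exit) with a JSON manifest as the source of truth. It is not Octobatch’s code: the manifest layout, the `submit` and `poll` callables, and the retry limit are all hypothetical.

```python
import json
import os
import tempfile
from typing import Callable, Dict, List


def load_manifest(path: str) -> dict:
    with open(path, encoding="utf-8") as f:
        return json.load(f)


def save_manifest(path: str, manifest: dict) -> None:
    # Write to a temp file in the same directory, then atomically replace the old
    # manifest, so a crash mid-write never leaves a half-written file behind.
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as f:
        json.dump(manifest, f, indent=2)
    os.replace(tmp_path, path)


def tick(
    path: str,
    submit: Callable[[List[str]], str],      # hypothetical: submits item IDs, returns a batch ID
    poll: Callable[[str], Dict[str, bool]],  # hypothetical: item ID -> succeeded? (absent = still running)
    max_attempts: int = 3,
) -> dict:
    """One wake-up: read state, advance it once, persist, and return."""
    manifest = load_manifest(path)
    items = manifest["items"]  # item ID -> {"state", "batch", "attempts"}

    # 1. Check on batches that are in flight.
    in_flight = {item["batch"] for item in items.values() if item["state"] == "in_flight"}
    for batch_id in in_flight:
        for item_id, ok in poll(batch_id).items():
            if item_id in items and items[item_id]["batch"] == batch_id:
                items[item_id]["state"] = "succeeded" if ok else "failed"

    # 2. Resubmit only what still needs work; give up after max_attempts.
    ready = []
    for item_id, item in items.items():
        if item["state"] in ("pending", "failed"):
            if item["attempts"] >= max_attempts:
                item["state"] = "abandoned"  # leave for manual triage
            else:
                ready.append(item_id)
    if ready:
        batch_id = submit(ready)
        # Caveat: a crash between submit() and save_manifest() would resubmit these
        # items on the next tick; a production system needs to guard against that.
        for item_id in ready:
            items[item_id].update(state="in_flight", batch=batch_id,
                                  attempts=items[item_id]["attempts"] + 1)

    # 3. Persist, then exit; the next tick starts from the saved manifest.
    save_manifest(path, manifest)
    return manifest
```

Because every tick starts by reading the manifest, killing the process between ticks loses nothing; the next run picks up exactly where the last one left off.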

Before I started development, I went looking for existing tools that handled this combination of problems, because I didn’t want to waste my time reinventing the wheel. I didn’t find anything that did the job I needed. Workflow orchestrators like Apache Airflow and Dagster manage DAGs and task dependencies, but they assume tasks are deterministic and don’t provide LLM-specific features like prompt template rendering, schema-based output validation, or retry logic triggered by semantic quality checks. LLM frameworks like LangChain and LlamaIndex are designed around real-time inference chains and agent loops; they don’t manage asynchronous batch job lifecycles, persist state across process crashes, or handle partial failure recovery at the chunk level. And the batch API client libraries from the providers themselves handle submission and retrieval for a single batch, but not multistage pipelines, cross-step validation, or provider-agnostic execution.
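Schema-based validation combined with a semantic check is the piece that general-purpose workflow tools don’t provide out of the box. Here is a stdlib-only sketch of the idea for a hypothetical blackjack decision record. The field names and allowed actions are assumptions, and a real system might use a JSON Schema library instead of hand-written checks.

```python
import json
from typing import Tuple

# Hypothetical output contract for one blackjack decision
REQUIRED_FIELDS = {"action": (str,), "confidence": (int, float)}
ALLOWED_ACTIONS = {"hit", "stand", "double", "split"}


def validate_output(raw: str) -> Tuple[bool, str]:
    """Return (is_valid, reason) for one raw LLM response."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return False, f"not valid JSON: {exc.msg}"
    if not isinstance(data, dict):
        return False, "expected a JSON object"

    # Structural checks: every required field is present with the right type
    for field, types in REQUIRED_FIELDS.items():
        if field not in data:
            return False, f"missing field: {field}"
        value = data[field]
        # JSON true/false parse as bool, which Python treats as an int subclass; reject them
        if isinstance(value, bool) or not isinstance(value, types):
            return False, f"wrong type for field: {field}"

    # Semantic checks: well-formed output can still be wrong for the task
    if data["action"] not in ALLOWED_ACTIONS:
        return False, f"unknown action: {data['action']}"
    if not 0 <= data["confidence"] <= 1:
        return False, "confidence must be between 0 and 1"
    return True, "ok"
```

A `False` result is what would route an item back through retry instead of into the final results, and the reason string gives the retry prompt something specific to fix.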

Nothing I found covered the full lifecycle of multiphase LLM batch workflows, from submission and polling through validation, retry, cost tracking, and crash recovery, across all three major AI providers. That’s what I built.

Lessons from the experiment

The goal of this article, as the first in my series on agentic engineering and AI-driven development, is to lay out the theory and structure of the Octobatch experiment. The rest of the series goes deep on the lessons I learned from it: the validation architecture, multi-LLM coordination, the practices and values that emerged from the work, and the orchestration mindset that ties it all together. A few early lessons stand out, because they illustrate what AIDD looks like in practice and why developer experience matters more than ever.

- You have to run things and check the data. Remember the drunken sailor, the “Hello, world” of Monte Carlo simulations? At one point I noticed that when I ran the simulation through Octobatch, 77.5% of the sailors fell in the water. The results for a random walk should be 50/50, so clearly something was badly wrong. It turned out the random number generator was being re-seeded at every iteration with sequential seed values, which created correlation bias between runs. I didn’t identify the problem immediately. I ran a bunch of tests using Claude Code as a test runner to generate each test, run it, and log the results, and Gemini looked at the results and found the root cause. Claude had trouble coming up with a fix that worked well, and proposed a workaround with a large list of preseeded random number values in the pipeline. Gemini proposed a hash-based fix after reviewing my conversations with Claude, but it seemed overly complex. Once I understood the problem and rejected their proposed solutions, I decided the best fix was simpler than either AI’s suggestion: a persistent RNG per simulation unit that advanced naturally through its sequence. I needed to understand both the statistics and the code to evaluate those three options. Plausible-looking output and correct output aren’t the same thing, and you need enough expertise to tell the difference. (We’ll talk more about this case in the next article in the series.)

- LLMs often overestimate complexity. At one point I wanted to add support for custom mathematical expressions in the analysis pipeline. Both Claude and Gemini pushed back, telling me, “This is scope creep for v1.0” and “Save it for v1.1.” Claude estimated three hours to implement. Because I knew the codebase, I knew we were already using asteval (a Python library that provides a safe, minimalistic evaluator for mathematical expressions and simple Python statements) to evaluate expressions elsewhere, so this looked like a straightforward reuse of an existing dependency. Both LLMs thought the solution would be far more complex and time-consuming than it actually was; it took just two prompts to Claude Code (generated by Claude) and about five minutes total to implement. The feature shipped and made the tool significantly more powerful. The AIs were being conservative because they didn’t have my context about the system’s architecture. Experience told me the integration would be trivial. Without that experience, I’d have listened to them and deferred a feature that took five minutes.

- AI is often biased toward adding code, not deleting it. Generative AI is, unsurprisingly, biased toward generation. So when I asked the LLMs to fix problems, their first response was often to add more code: another layer or another special case. I can’t think of a single time in the whole project when one of the AIs stepped back and said, “Tear this out and rethink the approach.” The most productive sessions were the ones where I overrode that instinct and pushed for simplicity. This is something experienced developers learn over a career: The most successful changes often delete more than they add, and the PRs we brag about are the ones that delete thousands of lines of code.

- The architecture emerged from failure. The AI tools and I didn’t design Octobatch’s core architecture up front. Our first attempt was a Python script with in-memory state and a lot of hope. It worked for small batches but fell apart at scale: A network hiccup meant restarting from scratch, and a malformed response required manual triage. A lot of things fell into place after I added the constraint that the system must survive being killed at any moment. That single requirement led to the tick model (wake up, check state, do work, persist, exit), the manifest file as the source of truth, and the whole crash-recovery architecture. We discovered the design by repeatedly failing to do something simpler.

- Your development history is a dataset. I’ve just told you several stories from the Octobatch project, and this series will be full of them. Every one of those stories came from going back through the chat logs between me, Claude, and Gemini. With AIDD, you have a complete transcript of every architectural decision, every wrong turn, every moment where you overruled the AI, and every moment where it corrected you. Very few development teams have ever had that level of fidelity in their project history. Mining those logs for lessons learned turns out to be one of the most valuable practices I’ve found.
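The seeding bug from the first lesson is easy to reproduce in miniature. The sketch below is not Octobatch’s code; it shows one plausible shape of the problem (a fresh generator seeded with sequential values at every step) next to the kind of fix the author chose, one persistent generator per simulation unit. The function names and the string-based per-unit seed are assumptions.

```python
import random
from typing import List


def steps_reseeded(unit: int, n_steps: int) -> List[int]:
    """Anti-pattern: a new generator at every step, seeded with sequential values."""
    return [random.Random(unit + step).choice((-1, 1)) for step in range(n_steps)]


def steps_persistent(unit: int, n_steps: int, base_seed: int = 2024) -> List[int]:
    """Fix: one generator per simulation unit, seeded once and advanced through its sequence."""
    rng = random.Random(f"{base_seed}-{unit}")  # str seeds are hashed deterministically (SHA-512)
    return [rng.choice((-1, 1)) for _ in range(n_steps)]


if __name__ == "__main__":
    a, b = steps_reseeded(0, 64), steps_reseeded(1, 64)
    # Unit 0 uses seeds 0..63 and unit 1 uses seeds 1..64,
    # so unit 1 replays unit 0's walk shifted by one step.
    print(a[1:] == b[:-1])  # → True
    c, d = steps_persistent(0, 64), steps_persistent(1, 64)
    print(c[1:] == d[:-1])  # independent streams: no such overlap expected
```

Correlation like this doesn’t show up in any single walk; it only shows up in the aggregate, which is why checking the summary statistics mattered.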

Near the end of the project, I switched to Cursor to make sure none of this was specific to Claude Code. I created fresh conversations using the same context files I’d been maintaining throughout development, and I was able to bootstrap productive sessions immediately; the context files worked exactly as designed. The practices I’d developed transferred cleanly to a different tool. The value of this approach comes from the habits, the context management, and the engineering judgment you bring to the conversation, not from any particular vendor.

These tools are moving the world in a direction that favors developers who understand the ways engineering can go wrong and know solid design and architecture patterns…and who are okay letting go of control of every line of code.

What’s next

Agentic engineering needs structure, and structure needs a concrete example to make it real. The next article in this series goes into Octobatch itself, because the way it orchestrates AI is a remarkably close parallel to what AIDD asks developers to do. Octobatch assigns roles to different processing steps, manages handoffs between them, validates their outputs, and recovers when they fail. That’s the same pattern I followed when building it: assigning roles to Claude and Gemini, managing handoffs between them, validating their outputs, and recovering when they went down the wrong path. Understanding how the system works turns out to be a good way to understand how to orchestrate AI-driven development. I’ll walk through the architecture, show what a real pipeline looks like from prompt to results, present the data from a 300-hand blackjack Monte Carlo simulation that puts all of these ideas to the test, and use all of that to demonstrate ideas we can apply directly to agentic engineering and AI-driven development.

Later articles go deeper into the practices and ideas I learned from this experiment that make AI-driven development work: how I coordinated multiple AI models without losing control of the architecture, what happened when I tested the code against what I actually intended to build, and what I learned about the gap between code that runs and code that does what you meant. Along the way, the experiment produced some findings I didn’t expect about how different AI models see code, and those findings turned out to matter more than I thought they would.
