The Hidden Cost of Agentic Failure – O’Reilly

Agentic AI has clearly moved beyond buzzword status. McKinsey’s November 2025 survey shows that 62% of organizations are already experimenting with AI agents, and the top performers are pushing them into core workflows in the name of efficiency, growth, and innovation.

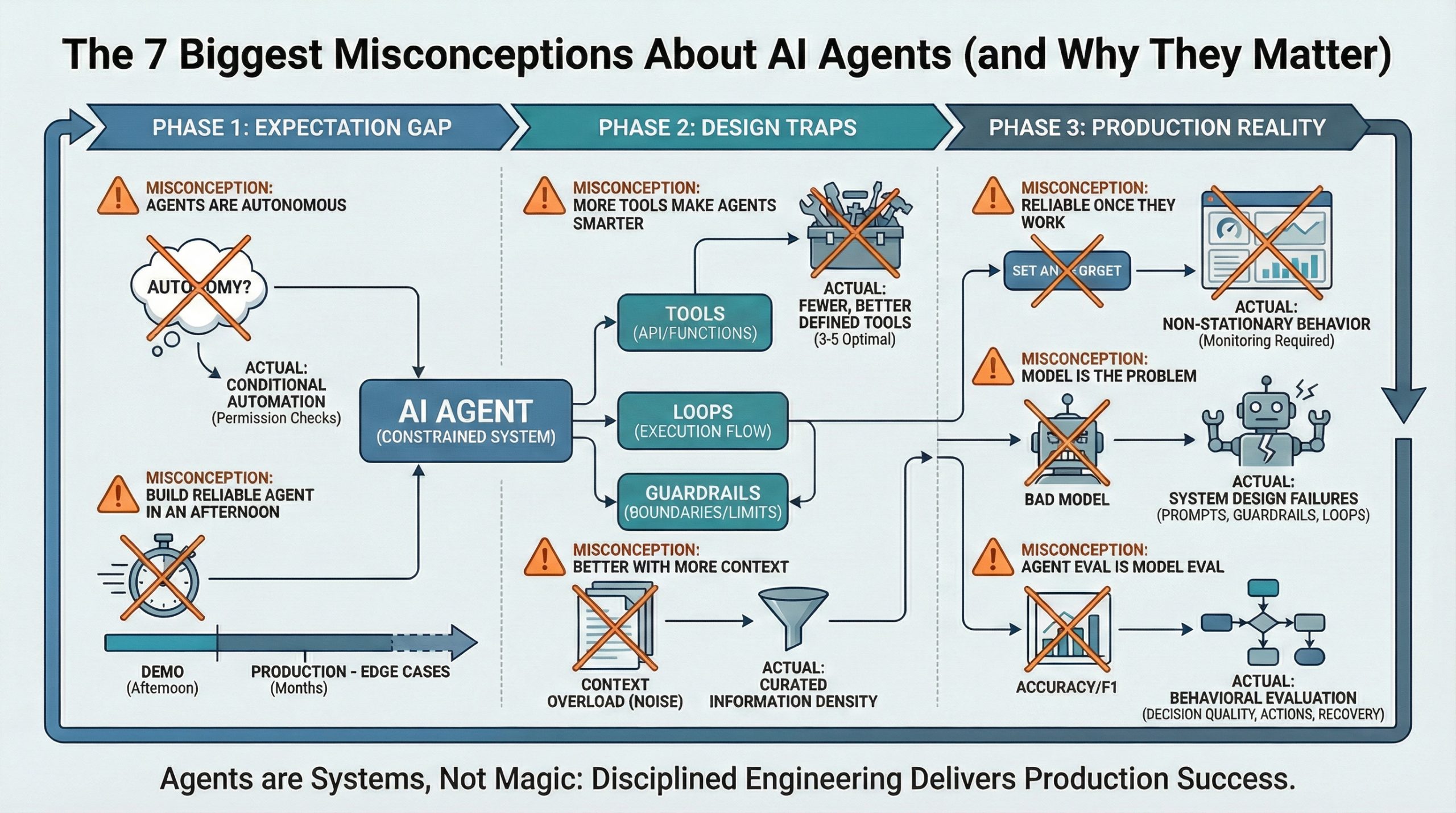

However, this is also where things can get uncomfortable. Everyone in the field knows LLMs are probabilistic. We all track leaderboard scores, but then quietly ignore that this uncertainty compounds when we wire multiple models together. That’s the blind spot. Most multi-agent systems (MAS) don’t fail because the models are bad. They fail because we compose them as if probability doesn’t compound.

The Architectural Debt of Multi-Agent Systems

The hard truth is that improving individual agents does very little to improve overall system-level reliability once errors are allowed to propagate unchecked. The core problem of agentic systems in production isn’t model quality alone; it’s composition. Once agents are wired together without validation boundaries, risk compounds.

In practice, this shows up in looping supervisors, runaway token costs, brittle workflows, and failures that appear intermittently and are nearly impossible to reproduce. These systems often work just well enough to pass benchmarks, then fail unpredictably once they’re placed under real operational load.

If you think about it, every agent handoff introduces a chance of failure. Chain enough of them together, and failure compounds. Even strong models with a 98% per-agent success rate degrade overall system success to about 90% after just five sequential hops, and lower beyond that. Each unchecked agent hop compounds failure probability and, with it, expected cost. Without explicit fault tolerance, agentic systems aren’t just fragile. They’re economically problematic.

This is the key shift in perspective. In production, MAS shouldn’t be thought of as collections of intelligent components. They behave like probabilistic pipelines, where every unvalidated handoff multiplies uncertainty and expected cost.

This is where many organizations are quietly accumulating what I call architectural debt. In software engineering, we’re comfortable talking about technical debt: development shortcuts that make systems harder to maintain over time. Agentic systems introduce a new form of debt. Every unvalidated agent boundary adds probabilistic risk that doesn’t show up in unit tests but surfaces later as instability, cost overruns, and unpredictable behavior at scale. And unlike technical debt, this one doesn’t get paid down with refactors or cleaner code. It accumulates silently, until the math catches up with you.

The Multi-Agent Reliability Tax

If you treat each agent’s task as an independent Bernoulli trial, a simple experiment with a binary outcome of success (p) or failure (q), probability becomes a harsh mistress. Look closely and you’ll find yourself at the mercy of the product reliability rule once you start building MAS. In systems engineering, this effect is formalized by Lusser’s law, which states that when independent components are executed in sequence, overall system success is the product of their individual success probabilities. While this is a simplified model, it captures the compounding effect that is otherwise easy to underestimate in composed MAS.

Consider a high-performing agent with a single-task accuracy of p = 0.98 (98%). If you apply the product rule for independent events to a sequential pipeline, you can model how your overall system accuracy unfolds. That is, if you assume each agent succeeds with probability $p_i$, its failure probability is $q_i = 1 - p_i$. Applied to a multi-agent pipeline of $n$ agents, this gives you:

$$P_{\text{system}} = \prod_{i=1}^{n} p_i = \prod_{i=1}^{n} (1 - q_i)$$

When every agent has the same accuracy $p$, this reduces to $P_{\text{system}} = p^n$.

Table 1 illustrates how errors propagate through your agent system without validation.

| # of agents (n) | Per-agent accuracy (p) | System accuracy ($p^n$) | Error rate |
| --- | --- | --- | --- |
| 1 agent | 98% | 98.0% | 2.0% |
| 3 agents | 98% | ∼94.1% | ∼5.9% |
| 5 agents | 98% | ∼90.4% | ∼9.6% |
| 10 agents | 98% | ∼81.7% | ∼18.3% |
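The numbers in Table 1 follow directly from the product rule. Here is a minimal sketch in Python, assuming independent agents; the function name `pipeline_success` is illustrative and not taken from the article’s notebook:

```python
import math
from typing import Iterable


def pipeline_success(success_probs: Iterable[float]) -> float:
    """Lusser's law: success of a sequential pipeline of independent agents."""
    return math.prod(success_probs)


# Reproduce Table 1 for identical agents with p = 0.98.
for n in (1, 3, 5, 10):
    accuracy = pipeline_success([0.98] * n)
    print(f"{n:>2} agents: accuracy {accuracy:.1%}, error rate {1 - accuracy:.1%}")
```

Because the function takes one probability per agent, it also covers pipelines with mixed agents, for example `pipeline_success([0.99, 0.95, 0.98])`.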

In production, LLMs aren’t 98% reliable on structured outputs in open-ended tasks. Because such tasks have no single correct output, correctness must be enforced structurally rather than assumed. Once an agent introduces a wrong assumption, a malformed schema, or a hallucinated tool result, every downstream agent conditions on that corrupted state. This is why you should insert validation gates to break the product rule of reliability.

From Stochastic Hope to Deterministic Engineering

Once you introduce validation gates, you change how failure behaves inside your system. Instead of allowing one agent’s output to become the unquestioned input for the next, you force every handoff to pass through an explicit boundary. The system no longer assumes correctness. It verifies it.

In practice, you’d want schema-enforced generation via libraries like Pydantic and Instructor. Pydantic is a data validation library for Python that helps you define a strict contract for what is allowed to pass between agents: types, fields, ranges, and invariants are checked at the boundary, and invalid outputs are rejected or corrected before they can propagate. Instructor moves that same contract into the generation step itself by forcing the model to retry until it produces a valid output or exhausts a bounded retry budget. Once validation exists, the reliability math fundamentally changes. If validation catches and recovers failures with probability $v$, the effective success probability of each hop becomes:

$$p_{\text{eff}} = p + (1 - p)\,v$$

Again, assume you have a per-agent accuracy of p = 0.98, but now you also have a validation catch rate of v = 0.9. Then you get:

$$p_{\text{eff}} = p + (1 - p)\,v = 0.98 + 0.02 \cdot 0.9 = 0.998$$

The + 0.02 · 0.9 term reflects recovered failures. It can simply be added because succeeding on the first pass and failing but being recovered are disjoint events. Table 2 shows how this changes your system’s behavior.

| # of agents (n) | Per-agent accuracy with validation (p + (1 − p)v) | System accuracy | Error rate |
| --- | --- | --- | --- |
| 1 agent | 99.8% | 99.8% | 0.2% |
| 3 agents | 99.8% | ∼99.4% | ∼0.6% |
| 5 agents | 99.8% | ∼99.0% | ∼1.0% |
| 10 agents | 99.8% | ∼98.0% | ∼2.0% |
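Table 2 can be reproduced the same way. The sketch below assumes, as the text does, that a failure caught by validation is also recovered, so the per-hop success is p + (1 − p)·v. The function names are illustrative.

```python
def effective_success(p: float, v: float) -> float:
    """Per-hop success when validation catches and recovers a share v of failures."""
    return p + (1.0 - p) * v


def validated_pipeline_success(p: float, v: float, n: int) -> float:
    """Success of n sequential hops, each protected by a validation gate."""
    return effective_success(p, v) ** n


# Reproduce Table 2 for p = 0.98 and a catch rate of v = 0.9.
for n in (1, 3, 5, 10):
    accuracy = validated_pipeline_success(0.98, 0.9, n)
    print(f"{n:>2} agents: accuracy {accuracy:.1%}, error rate {1 - accuracy:.1%}")
```

Setting `v = 0` falls back to the unvalidated case from Table 1, which makes it easy to compare different catch rates side by side.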

Comparing Table 1 and Table 2 makes the effect explicit: validation fundamentally changes how failure propagates through your MAS. It’s no longer a naive multiplicative decay; it’s a controlled reliability amplification. If you want a deeper, implementation-level walkthrough of validation patterns for MAS, I cover it in *AI Agents: The Definitive Guide*. You can also find a notebook in the GitHub repository to run the computation from Table 1 and Table 2.

Now, you might ask what you can do if you can’t make your models 100% perfect. The good news is that you can make the system more resilient through specific architectural shifts.
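Returning to the validation boundary itself, the following is a minimal sketch of a schema gate with a bounded retry budget. It assumes Pydantic v2; the `ResearchHandoff` contract, the `validated_handoff` helper, and the `flaky_agent` stub are hypothetical names made up for illustration. It does not use Instructor, which applies the same idea inside the model call.

```python
from typing import Callable, Optional

from pydantic import BaseModel, Field, ValidationError


class ResearchHandoff(BaseModel):
    """Hypothetical contract for what one agent may pass to the next."""

    query: str = Field(min_length=1)
    sources: list[str] = Field(min_length=1)  # at least one source is required
    confidence: float = Field(ge=0.0, le=1.0)


def validated_handoff(
    generate: Callable[[int], str], max_retries: int = 3
) -> Optional[ResearchHandoff]:
    """Ask the upstream agent again until its JSON output satisfies the
    contract or the retry budget is exhausted (then return None)."""
    for attempt in range(max_retries):
        raw = generate(attempt)
        try:
            return ResearchHandoff.model_validate_json(raw)
        except ValidationError:
            continue  # reject the output; it never reaches the next agent
    return None


def flaky_agent(attempt: int) -> str:
    """Stub for an LLM call: the first output violates the contract."""
    outputs = [
        '{"query": "lusser law", "sources": [], "confidence": 1.7}',
        '{"query": "lusser law", "sources": ["https://example.com"], "confidence": 0.8}',
    ]
    return outputs[min(attempt, len(outputs) - 1)]


result = validated_handoff(flaky_agent)
print(result)
```

Returning `None` on an exhausted budget forces the caller to handle the failure explicitly instead of passing a malformed payload downstream.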

From Deterministic Engineering to Exploratory Search

While validation keeps your system from breaking, it doesn’t necessarily help the system find the right answer when the task is difficult. For that, you need to move from filtering to searching. You give your agent a way to generate multiple candidate paths, replacing fragile one-shot execution with a controlled search over alternatives. This is commonly known as test-time compute. Instead of committing to the first sampled output, the system allocates more inference budget to explore multiple candidates before making a decision. Reliability improves not because your model is better but because your system delays commitment.

At the simplest level, this doesn’t require anything sophisticated. Even a basic best-of-N strategy already improves system stability. For instance, if you sample multiple independent outputs and select the best one, you reduce the chance of committing to a bad draw. This alone is often enough to stabilize brittle pipelines that fail under single-shot execution.
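A best-of-N loop fits in a few lines. In this sketch, `sample` and `score` are hypothetical callables standing in for a model call and a scoring function. The helper `chance_of_good_candidate` rests on two stated assumptions: samples are independent, and the selector always recognizes a good candidate when one exists.

```python
from typing import Callable, TypeVar

T = TypeVar("T")


def best_of_n(sample: Callable[[], T], score: Callable[[T], float], n: int = 5) -> T:
    """Draw n candidates and keep the highest-scoring one."""
    if n < 1:
        raise ValueError("n must be at least 1")
    candidates = [sample() for _ in range(n)]
    return max(candidates, key=score)


def chance_of_good_candidate(p_good: float, n: int) -> float:
    """Probability that at least one of n independent samples is good."""
    return 1.0 - (1.0 - p_good) ** n


# Toy usage: fixed drafts replace real model samples, and length is the score.
drafts = iter(["42", "The answer is 42.", "idk"])
best = best_of_n(lambda: next(drafts), score=len, n=3)
print(best)
print(f"{chance_of_good_candidate(0.7, 3):.3f}")
```

Under those assumptions, three samples from a generator that is right 70% of the time contain at least one good answer about 97% of the time. In practice the gain is bounded by how well the scoring function separates good from bad candidates.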

One effective way to select the best of multiple samples is to use frameworks like RULER. RULER (Relative Universal LLM-Elicited Rewards) is a general-purpose reward function that uses a configurable LLM-as-judge along with a ranking rubric you can adjust based on your use case. This works because ranking multiple related candidate solutions is easier than scoring each one in isolation. Looking at multiple solutions side by side allows the LLM-as-judge to identify deficiencies and rank the candidates accordingly. Now you get evidence-anchored verification. The judge doesn’t just agree; it verifies and compares outputs against each other. This acts as a “circuit breaker” for error propagation by resetting your failure probability at every agent boundary.
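The sketch below illustrates the general pattern of group-relative judging with a circuit breaker. It is not the RULER API: `GroupJudge`, `pick_with_group_judge`, the `min_score` threshold, and the rule-based `toy_judge` are all hypothetical stand-ins. A real judge would be an LLM call that sees every candidate in one prompt.

```python
from typing import Callable, Optional, Sequence

# A group judge sees all candidates at once and returns one score per candidate.
GroupJudge = Callable[[Sequence[str]], Sequence[float]]


def pick_with_group_judge(
    candidates: Sequence[str], judge: GroupJudge, min_score: float = 0.5
) -> Optional[str]:
    """Return the top-ranked candidate, or None if even the best one
    scores below min_score (the circuit breaker trips)."""
    if not candidates:
        return None
    scores = judge(candidates)
    if len(scores) != len(candidates):
        raise ValueError("judge must return one score per candidate")
    best_index = max(range(len(candidates)), key=lambda i: scores[i])
    if scores[best_index] < min_score:
        return None  # stop here instead of passing a weak result downstream
    return candidates[best_index]


def toy_judge(group: Sequence[str]) -> Sequence[float]:
    """Rule-based stand-in: score evidence citations relative to the group."""
    hits = [text.lower().count("source:") for text in group]
    top = max(hits)
    return [h / top if top else 0.0 for h in hits]


print(pick_with_group_judge(["Paris. source: atlas", "Lyon"], toy_judge))
print(pick_with_group_judge(["Lyon", "Nice"], toy_judge))
```

The second call returns `None` because no candidate offers any evidence, so nothing is handed to the next agent.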

Amortized Intelligence with Reinforcement Learning

As a next possible step, you could use group-based reinforcement learning (RL), such as group relative policy optimization (GRPO) [1] and group sequence policy optimization (GSPO) [2], to turn that search into a learned policy. GRPO works on the token level, while GSPO works on the sequence level. You can take the “golden traces” found by your search and use them to adjust your base agents. The golden traces are your successful reasoning paths. Now you aren’t just filtering errors anymore; you’re training the agents to avoid making them in the first place, because your system internalizes those corrections into its own policy. The key shift is that successful decision paths are retained and reused rather than rediscovered repeatedly at inference time.
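The “group” in these methods refers to scoring each sampled trace relative to its siblings for the same prompt. The sketch below shows only the group-normalized advantage described in the GRPO paper [1]: reward minus the group mean, divided by the group standard deviation. The use of the population standard deviation, the `eps` term, and the trace names are assumptions of this sketch. The policy update itself, and the token-level versus sequence-level weighting, are not shown.

```python
from statistics import mean, pstdev
from typing import Sequence


def group_relative_advantages(rewards: Sequence[float], eps: float = 1e-8) -> list[float]:
    """Normalize each reward against the other samples in its group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # assumption: population std; eps avoids division by zero
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four hypothetical traces for one prompt, rewarded 1.0 if the judge accepted them.
traces = ["trace_a", "trace_b", "trace_c", "trace_d"]
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)

# Traces with a positive advantage are the "golden traces" to reinforce.
golden = [t for t, a in zip(traces, advantages) if a > 0]
print(golden)
```

If every trace in a group earns the same reward, all advantages are zero and the group carries no learning signal, which is why the search stage needs to produce a mix of better and worse candidates.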

From Prototypes to Production

If you want your agentic systems to behave reliably in production, I recommend you approach agentic failure in this order:

- Introduce strict validation between agents. Enforce schemas and contracts so failures are caught early instead of propagating silently.

- Use simple best-of-N sampling or tree-based search with lightweight judges such as RULER to score multiple candidates before committing.

- If you need consistent behavior at scale, use RL to teach your agents how to behave more reliably for your specific use case.

The reality is you won’t be able to fully eliminate uncertainty in your MAS, but these methods give you real leverage over how uncertainty behaves. Reliable agentic systems are built by design, not by chance.

References

1. Zhihong Shao et al., “DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models,” 2024, https://arxiv.org/abs/2402.03300.

2. Chujie Zheng et al., “Group Sequence Policy Optimization,” 2025, https://arxiv.org/abs/2507.18071.
