80% of Fortune 500 use active AI Agents: Observability, governance, and security shape the new frontier

Today, Microsoft is releasing the new Cyber Pulse report to provide leaders with straightforward, practical insights and guidance on new cybersecurity risks. One of today’s most pressing concerns is the governance of AI and autonomous agents. AI agents are scaling faster than some companies can see them, and that visibility gap is a business risk.1 Like people, AI agents require protection through strong observability, governance, and security using Zero Trust principles. As the report highlights, organizations that succeed in the next phase of AI adoption will be those that move with speed and bring business, IT, security, and developer teams together to observe, govern, and secure their AI transformation.

Agent building isn’t limited to technical roles; today, employees in various positions create and use agents in daily work. More than 80% of Fortune 500 companies today use active AI agents built with low-code/no-code tools.2 AI is ubiquitous in many operations, and generative AI-powered agents are embedded in workflows across sales, finance, security, customer service, and product innovation.

With agent use expanding and transformation opportunities multiplying, now is the time to get foundational controls in place. AI agents should be held to the same standards as employees or service accounts. That means applying long‑standing Zero Trust security principles consistently:

- Least privilege access: Give every user, AI agent, or system only what they need, and no more.

- Explicit verification: Always confirm who or what is requesting access using identity, device health, location, and risk level.

- Assume compromise can occur: Design systems expecting that cyberattackers will get inside.

These principles are not new, and many security teams have implemented Zero Trust principles in their organization. What’s new is their application to non‑human users operating at scale and speed. Organizations that embed these controls within their deployment of AI agents from the beginning will be able to move faster, building trust in AI.
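To make the three Zero Trust principles concrete, here is a minimal Python sketch of a deny-by-default access check for an agent. It is an illustration only, not a Microsoft API: the `AccessRequest` fields, the `GRANTS` table, the agent name, and the three risk levels are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    """Signals evaluated on every request (hypothetical fields)."""
    agent_id: str
    resource: str
    identity_verified: bool
    device_healthy: bool
    risk_level: str  # assumed to be "low", "medium", or "high"


# Hypothetical least-privilege grants: each agent lists only what it needs.
GRANTS = {
    "invoice-triage-agent": {"finance/invoices"},
}


def authorize(request: AccessRequest) -> bool:
    """Deny by default; allow only verified, low-risk requests inside the grant."""
    # Explicit verification: check the signals on every request, with no
    # standing trust carried over from earlier calls.
    if not (request.identity_verified and request.device_healthy):
        return False
    # Assume compromise can occur: anything above low risk is refused.
    if request.risk_level != "low":
        return False
    # Least privilege: unknown agents and ungranted resources are both denied.
    return request.resource in GRANTS.get(request.agent_id, set())
```

In this sketch, an agent that is verified and low risk still gets nothing outside its grant, and an agent that is not listed in `GRANTS` at all is denied everywhere.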

The rise of human-led AI agents

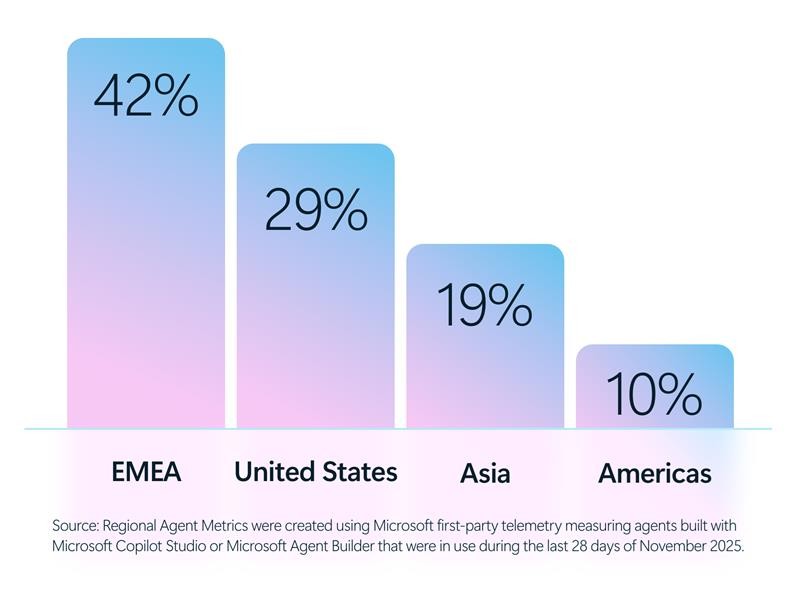

The growth of AI agents spans many regions around the world, from the Americas to Europe, Middle East, and Africa (EMEA), and Asia.

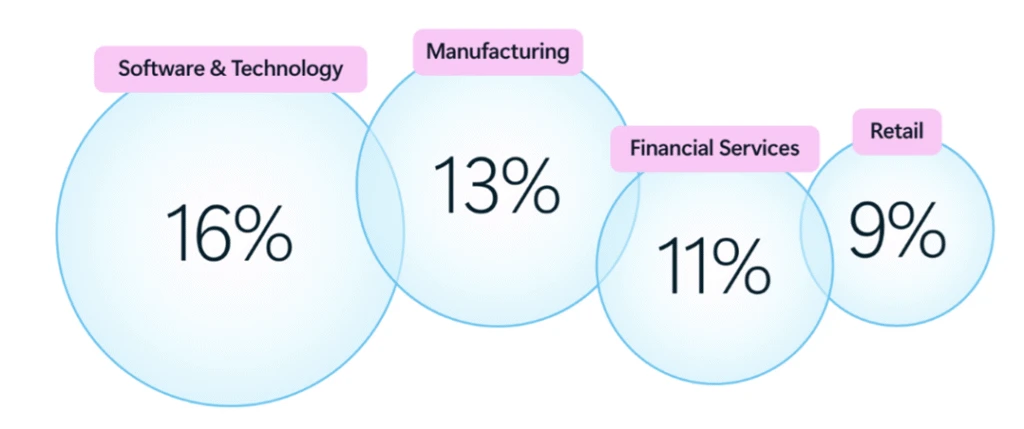

According to Cyber Pulse, leading industries such as software and technology (16%), manufacturing (13%), financial institutions (11%), and retail (9%) are using agents to support increasingly complex tasks: drafting proposals, analyzing financial data, triaging security alerts, automating repetitive processes, and surfacing insights at machine speed.3 These agents can operate in assistive modes, responding to user prompts, or autonomously, executing tasks with minimal human intervention.

And unlike traditional software, agents are dynamic. They act. They decide. They access data. And increasingly, they interact with other agents.

That changes the risk profile fundamentally.

The blind spot: Agent growth without observability, governance, and security

Despite the rapid adoption of AI agents, many organizations struggle to answer some basic questions:

- How many agents are running across the enterprise?

- Who owns them?

- What data do they touch?

- Which agents are sanctioned, and which aren’t?

This isn’t a hypothetical concern. Shadow IT has existed for decades, but shadow AI introduces new dimensions of risk. Agents can inherit permissions, access sensitive information, and generate outputs at scale, sometimes outside the visibility of IT and security teams. Bad actors might exploit agents’ access and privileges, turning them into unintended double agents. Like human employees, an agent with too much access, or the wrong instructions, can become a vulnerability. When leaders lack observability in their AI ecosystem, risk accumulates silently.

According to the Cyber Pulse report, 29% of employees have already turned to unsanctioned AI agents for work tasks.4 This disparity is noteworthy, as it indicates that numerous organizations are deploying AI capabilities and agents before establishing appropriate controls for access management, data protection, compliance, and accountability. In regulated sectors such as financial services, healthcare, and the public sector, this gap can have particularly significant consequences.

Why observability comes first

You can’t protect what you can’t see, and you can’t manage what you don’t understand. Observability is having a control plane across all layers of the organization (IT, security, developers, and AI teams) to understand:

- What agents exist

- Who owns them

- What systems and data they touch

- How they behave

In the Cyber Pulse report, we outline five core capabilities that organizations need to establish for true observability and governance of AI agents:

- Registry: A centralized registry acts as a single source of truth for all agents across the organization: sanctioned, third‑party, and emerging shadow agents. This inventory helps prevent agent sprawl, enables accountability, and supports discovery while allowing unsanctioned agents to be restricted or quarantined when necessary.

- Access control: Each agent is governed using the same identity‑ and policy‑driven access controls applied to human users and applications. Least‑privilege permissions, enforced consistently, help ensure agents can access only the data, systems, and workflows required to fulfill their purpose: no more, no less.

- Visualization: Real‑time dashboards and telemetry provide insight into how agents interact with people, data, and systems. Leaders can see where agents are operating, understand dependencies, and monitor behavior and impact, supporting faster detection of misuse, drift, or emerging risk.

- Interoperability: Agents operate across Microsoft platforms, open‑source frameworks, and third‑party ecosystems under a consistent governance model. This interoperability allows agents to collaborate with people and other agents across workflows while remaining managed within the same enterprise controls.

- Security: Built‑in protections safeguard agents from internal misuse and external cyberthreats. Security signals, policy enforcement, and integrated tooling help organizations detect compromised or misaligned agents early and respond quickly, before issues escalate into business, regulatory, or reputational harm.
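The registry capability maps closely to the basic questions raised earlier: how many agents exist, who owns them, what data they touch, and which are sanctioned. The Python sketch below shows one way such an inventory could be modeled in memory. The class names, fields, and sample agents are hypothetical and chosen for illustration; a real registry would sit on top of an identity platform and discovery tooling rather than a dictionary.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Set


@dataclass
class AgentRecord:
    agent_id: str
    owner: Optional[str]      # None: nobody has claimed the agent yet
    data_sources: Set[str]    # systems and data the agent touches
    sanctioned: bool
    quarantined: bool = False


class AgentRegistry:
    """In-memory stand-in for a central agent inventory (illustrative only)."""

    def __init__(self) -> None:
        self._agents: Dict[str, AgentRecord] = {}

    def register(self, record: AgentRecord) -> None:
        self._agents[record.agent_id] = record

    def count(self) -> int:
        # How many agents are running across the enterprise?
        return len(self._agents)

    def unowned(self) -> List[str]:
        # Who owns them? Surface the agents where the answer is "nobody".
        return sorted(a.agent_id for a in self._agents.values() if a.owner is None)

    def touching(self, data_source: str) -> List[str]:
        # What data do they touch?
        return sorted(
            a.agent_id for a in self._agents.values() if data_source in a.data_sources
        )

    def quarantine_unsanctioned(self) -> List[str]:
        # Which agents are not sanctioned? Quarantine them and report the IDs.
        flagged = []
        for agent in self._agents.values():
            if not agent.sanctioned and not agent.quarantined:
                agent.quarantined = True
                flagged.append(agent.agent_id)
        return sorted(flagged)


registry = AgentRegistry()
registry.register(AgentRecord("sales-drafter", "sales-ops", {"crm"}, sanctioned=True))
registry.register(AgentRecord("expense-bot", None, {"crm", "finance"}, sanctioned=False))
```

With the two sample records, `registry.unowned()` and `registry.quarantine_unsanctioned()` both point at `expense-bot`, which is the kind of shadow agent the registry is meant to surface.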

Governance and security are not the same, and both matter

One important clarification emerging from Cyber Pulse is this: governance and security are related, but not interchangeable.

- Governance defines ownership, accountability, policy, and oversight.

- Security enforces controls, protects access, and detects cyberthreats.

Both are required. And neither can succeed in isolation.

AI governance cannot live solely within IT, and AI security cannot be delegated solely to chief information security officers (CISOs). This is a cross‑functional responsibility, spanning legal, compliance, human resources, data science, business leadership, and the board.

When AI risk is treated as a core enterprise risk, alongside financial, operational, and regulatory risk, organizations are better positioned to move quickly and safely.

Strong security and governance do more than reduce risk: they enable transparency. And transparency is fast becoming a competitive advantage.

From risk management to competitive advantage

This is an exciting time for leading Frontier Firms. Many organizations are already using this moment to modernize governance, reduce overshared data, and establish security controls that allow safe use. They are proving that security and innovation are not opposing forces; they are reinforcing ones. Security is a catalyst for innovation.

According to the Cyber Pulse report, the leaders who act now will mitigate risk, unlock faster innovation, protect customer trust, and build resilience into the very fabric of their AI-powered enterprises. The future belongs to organizations that innovate at machine speed and observe, govern, and secure with the same precision. If we get this right, and I know we will, AI becomes more than a breakthrough in technology: it becomes a breakthrough in human ambition.

To learn more about Microsoft Security solutions, visit our website. Bookmark the Security blog to keep up with our expert coverage on security matters. Also, follow us on LinkedIn (Microsoft Security) and X (@MSFTSecurity) for the latest news and updates on cybersecurity.

1Microsoft Data Security Index 2026: Unifying Data Protection and AI Innovation, Microsoft Security, 2026.

2Based on Microsoft first‑party telemetry measuring agents built with Microsoft Copilot Studio or Microsoft Agent Builder that were in use during the last 28 days of November 2025.

3Industry and Regional Agent Metrics were created using Microsoft first‑party telemetry measuring agents built with Microsoft Copilot Studio or Microsoft Agent Builder that were in use during the last 28 days of November 2025.

4July 2025 multi-national survey of more than 1,700 data security professionals commissioned by Microsoft from Hypothesis Group.

Methodology:

Industry and Regional Agent Metrics were created using Microsoft first‑party telemetry measuring agents built with Microsoft Copilot Studio or Microsoft Agent Builder that were in use during the last 28 days of November 2025.

2026 Data Security Index:

A 25-minute multinational online survey was conducted from July 16 to August 11, 2025, among 1,725 data security leaders.

Questions centered around the data security landscape, data security incidents, securing employee use of generative AI, and the use of generative AI in data security programs to highlight comparisons to 2024.

One-hour in-depth interviews were conducted with 10 data security leaders in the United States and United Kingdom to gather stories about how they are approaching data security in their organizations.

Definitions:

Active Agents are 1) deployed to production and 2) have some “real activity” associated with them in the past 28 days.

“Real activity” is defined as 1+ engagement with a user (assistive agents) OR 1+ autonomous runs (autonomous agents).
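The definition above can be read as a simple predicate over an agent's usage history. The Python sketch below is one interpretation, not the telemetry logic Microsoft uses: the `AgentUsage` type and its fields are hypothetical, the window boundaries are assumed to be inclusive, and for simplicity any user engagement or autonomous run counts regardless of whether the agent is assistive or autonomous.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import List

# The 28-day window comes from the definition; inclusive bounds are an assumption.
ACTIVITY_WINDOW = timedelta(days=28)


@dataclass
class AgentUsage:
    """Hypothetical usage record for a single agent."""
    deployed_to_production: bool
    user_engagements: List[datetime] = field(default_factory=list)
    autonomous_runs: List[datetime] = field(default_factory=list)


def is_active(agent: AgentUsage, as_of: datetime) -> bool:
    """Return True if the agent meets both conditions of the definition."""
    # Condition 1: the agent must be deployed to production.
    if not agent.deployed_to_production:
        return False
    # Condition 2: at least one engagement or autonomous run in the window.
    window_start = as_of - ACTIVITY_WINDOW
    events = agent.user_engagements + agent.autonomous_runs
    return any(window_start <= event <= as_of for event in events)
```

Under this reading, an agent that was last used 40 days before the measurement date is not counted, and neither is an agent with recent test traffic that was never deployed to production.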
