Agent Evaluation: The fitting strategy to Examine and Measure Agentic AI Effectivity

d” “4” “4”=”2″ “” “”]

d” “4” “4”=”2″ “” “” “1”]

On this text, you may examine a wise, production-focused framework for testing and measuring the real-world effectivity of agentic AI strategies.

Issues we’re going to cowl embrace:

- Why evaluating brokers differs principally from evaluating standalone language fashions.

- The 4 pillars of agent evaluation and basically essentially the most useful metrics for each.

- The fitting strategy to assemble an computerized, human, or hybrid evaluation pipeline that catches failures early.

Let’s get correct to it.

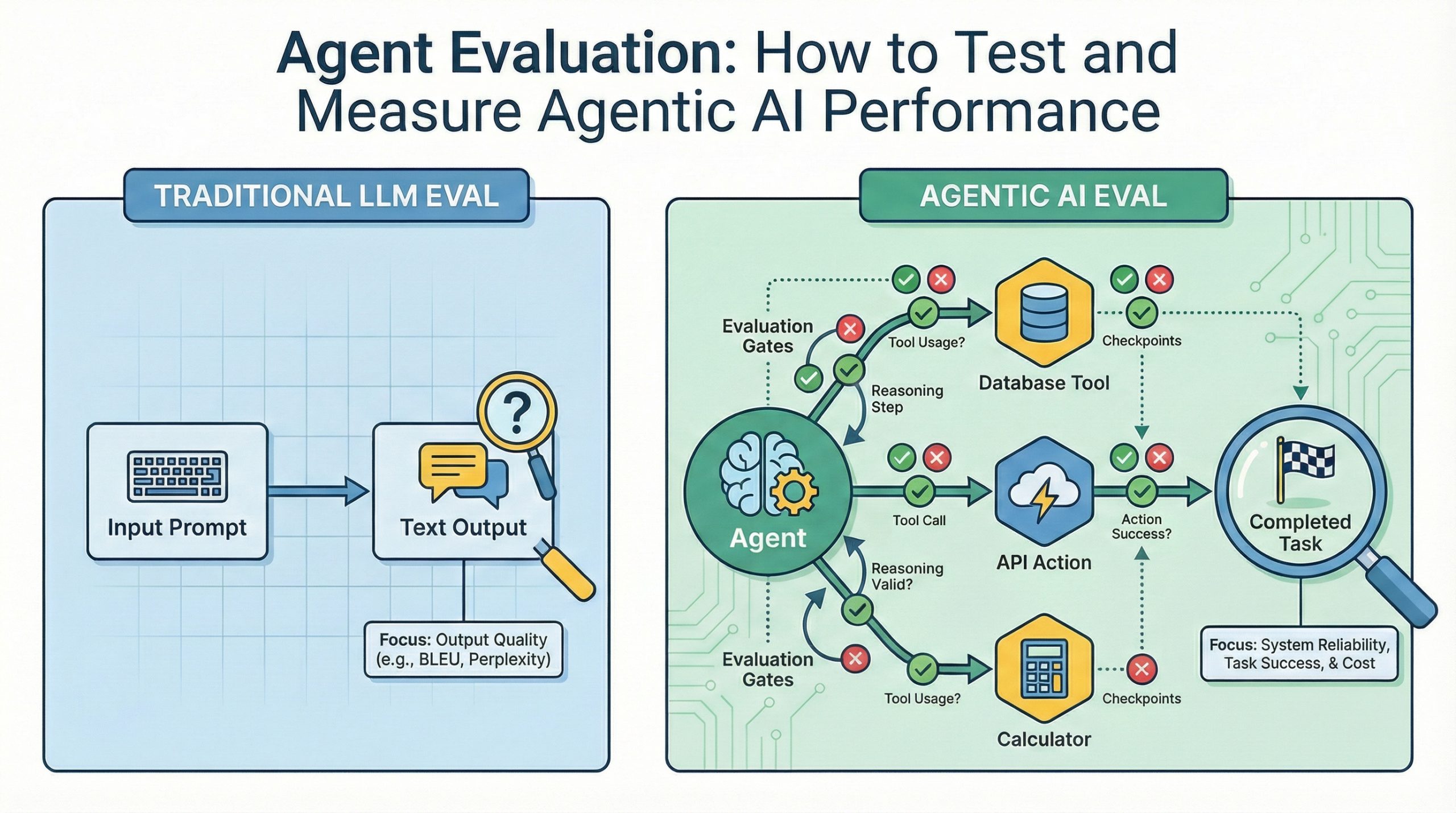

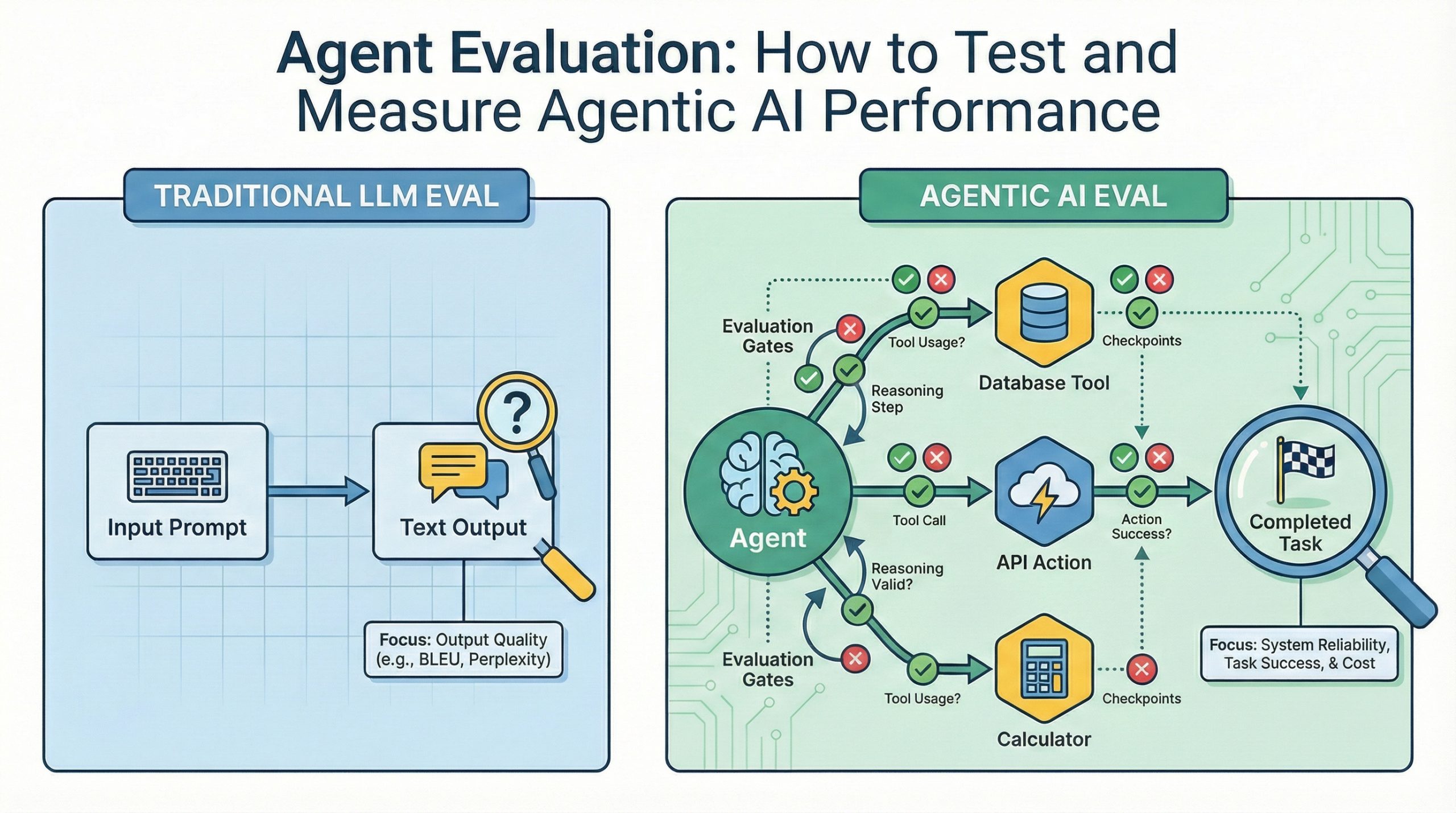

Agent Evaluation: The fitting strategy to Examine and Measure Agentic AI Effectivity (click on on to enlarge)

Image by Creator

Introduction

AI brokers that use devices, make alternatives, and full multi-step duties aren’t prototypes anymore. The issue is figuring out whether or not or not your agent actually works reliably in manufacturing. Typical language model evaluation metrics like BLEU scores or perplexity miss what points for brokers. Did it accomplish the obligation precisely? Did it use devices appropriately? Could it recuperate from failures?

This data covers a wise framework for evaluating agent effectivity all through 4 dimensions that resolve manufacturing readiness. You’ll see what to measure, which evaluation methods match utterly completely different use cases, and assemble an evaluation pipeline that catches points sooner than they hit clients.

Why Agent Evaluation Differs from LLM Evaluation

Evaluating standalone language fashions focuses on textual content material prime quality metrics like coherence, factual accuracy, and response relevance. These metrics assume the model’s job ends when it generates textual content material. Agent evaluation requires a particular methodology on account of brokers don’t merely generate textual content material. They take actions, invoke devices with specific parameters, make sequential alternatives that assemble on earlier steps, and may recuperate when exterior APIs fail or return shocking information.

A purchaser assist agent should lookup order standing, course of refunds, and exchange purchaser data. Typical LLM metrics inform you nothing about whether or not or not it known as the acceptable API endpoints, handed acceptable purchaser IDs, or handled cases the place refund requests exceeded protection limits. Agent evaluation ought to assess exercise completion success, system invocation accuracy, reasoning prime quality all through multi-step workflows, and failure coping with.

[=”” products=”v1|389461097816|0″ visible=”description” title_tag=”div” img_ratio=”4×3″] [=”” products=”MRNNETWORK” visible=”description” title_tag=”div” img_ratio=”4×3″]Evaluating language fashions versus evaluating brokers is like testing a calculator’s present versus testing an entire financial system. One focuses on output prime quality, the alternative on whether or not or not the system accomplishes its supposed purpose reliably under precise circumstances.

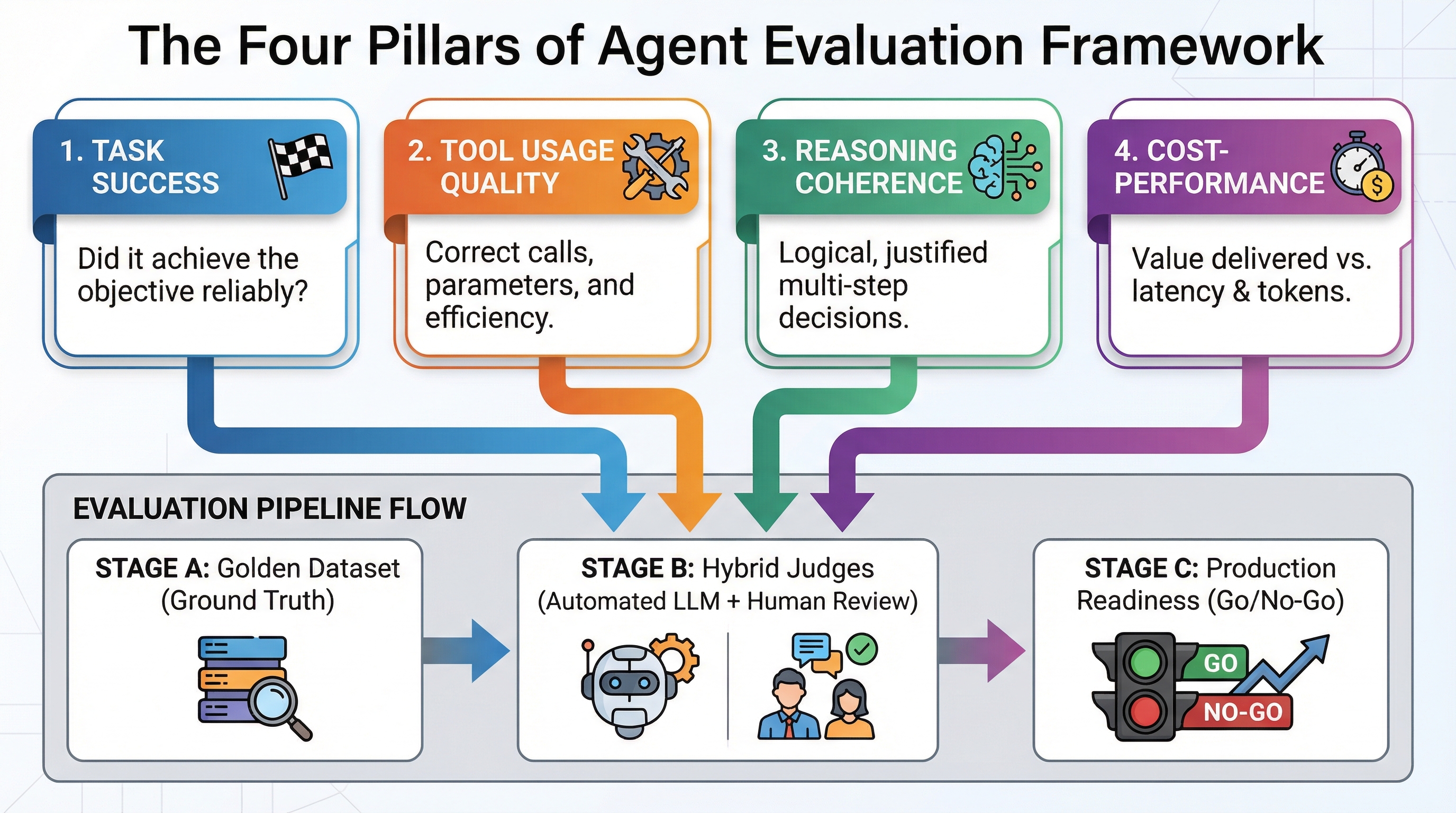

The 4 Pillars of Agent Evaluation

Agent evaluation frameworks measure 4 interconnected dimensions. Each addresses a particular failure mode that will break agent reliability.

Exercise Success measures whether or not or not the agent accomplished its assigned purpose. This requires precise definition. For a purchaser assist agent, “resolved the shopper inquiry” might indicate the shopper’s question was answered (outcome-based), that all required workflow steps have been completed (process-based), or that the shopper expressed satisfaction (quality-based). Fully completely different definitions lead to utterly completely different evaluation approaches and optimization strategies.

[=”” products=”v1|389105807093|0″ visible=”description” title_tag=”div” img_ratio=”4×3″ =”2,1″] [=”” products=”v1|389105807093|0″ visible=”description” title_tag=”div” img_ratio=”4×3″ =”2,1″]System Utilization Prime quality examines whether or not or not the agent invoked the acceptable devices with acceptable arguments at relevant cases. Poor system utilization appears in quite a lot of varieties: calling devices that don’t help get hold of the goal (relevance failures), passing malformed parameters that set off API errors (accuracy failures), making redundant calls that waste tokens and latency (effectivity failures), or skipping wanted system calls (completeness failures). Reliable system calling patterns distinguish manufacturing brokers from prototype demonstrations.

Reasoning Coherence assesses whether or not or not the agent’s decision-making course of was logical and justified given obtainable data. Manufacturing strategies need interpretability and debugging performance. An agent that arrives on the correct decision by illogical reasoning will fail unpredictably as circumstances change. Reasoning evaluation examines whether or not or not intermediate steps adjust to from earlier observations, whether or not or not the agent considered associated alternate choices, and whether or not or not it updated beliefs appropriately when new data contradicted assumptions.

Worth-Effectivity Commerce-offs quantify what each worthwhile exercise costs in tokens, API calls, latency, and infrastructure sources relative to the value delivered. An agent that achieves 95% exercise success nonetheless requires 50 API calls and 30 seconds per exercise is also technically acceptable nonetheless economically unviable. Evaluation ought to steadiness accuracy in opposition to operational costs to go looking out architectures that ship acceptable effectivity at sustainable expense.

This evaluation rubric provides concrete measurement dimensions:

| Pillar | What You’re Measuring | The fitting strategy to Measure | Occasion Metric |

|---|---|---|---|

| Exercise Success | Did the agent full the goal? | Binary finish end result plus prime quality scoring | Exercise completion charge (0-100%) |

| System Utilization Prime quality | Did it identify the acceptable devices with acceptable arguments? | Deterministic validation plus LLM-as-a-Select | System identify accuracy, parameter correctness |

| Reasoning Coherence | Have been intermediate steps logical and justified? | LLM-as-a-Select with chain-of-thought analysis | Reasoning prime quality score (1-5 scale) |

| Worth-Effectivity | What did it worth versus price delivered? | Token monitoring, API monitoring, latency measurement | Worth per worthwhile exercise, time to completion |

Select one or two metrics from each pillar based in your manufacturing constraints and failure modes it is important catch. You don’t must measure all of the items, merely what points to your specific use case.

Three Evaluation Approaches: Automated, Human, and Hybrid

Agent evaluation implementations fall into three lessons based on who or what performs the evaluation.

[=”” products=”v1|267087186977|0″ visible=”description” title_tag=”div” img_ratio=”4×3″]LLM-as-a-Select makes use of a further succesful model to guage your agent’s outputs robotically. GPT-4 or Claude assesses whether or not or not your agent’s responses met prime quality requirements that are troublesome to specify as deterministic tips. The evaluator model receives the agent’s enter, its output, and a grading rubric, then scores the response on dimensions like helpfulness, accuracy, or protection compliance. This handles subjective requirements that resist straightforward validation tips, resembling tone appropriateness or clarification readability, whereas sustaining the rate and consistency of automated testing.

LLM-as-a-Select evaluation works best when you can current clear evaluation requirements throughout the instant and when the evaluator model has satisfactory performance to guage the size you care about. Stay up for evaluator fashions that are too lenient (grade inflation), too strict (false failures), or inconsistent all through comparable cases (unstable scoring).

Human evaluation entails exact people reviewing agent outputs in opposition to prime quality requirements. This catches edge cases and subjective prime quality factors that automated methods miss, significantly for duties requiring space expertise, cultural consciousness, or nuanced judgment. The first challenges are worth, velocity, and scaling. Human evaluation costs $10-50 per exercise counting on complexity and reviewer expertise, takes hours to days barely than seconds, and will’t consistently monitor manufacturing guests. Human evaluation works best for calibration datasets, failure analysis, and periodic prime quality audits barely than regular monitoring.

Hybrid approaches combine automated filtering with human overview of vital cases. Start with LLM-as-a-Select for first-pass evaluation of all agent outputs, then route failures, edge cases, and high-stakes alternatives to human reviewers. This provides automation’s velocity and safety whereas sustaining human oversight the place it points most. The tactic mirrors evaluation strategies utilized in RAG strategies, the place automated metrics catch obvious failures whereas human overview validates retrieval relevance and reply prime quality.

Evaluation methodology choice relies upon upon your specific constraints. Extreme-volume, low-stakes duties favor pure automation. Extreme-stakes alternatives with superior prime quality requirements need human overview. Most manufacturing strategies revenue from hybrid approaches that steadiness automation with centered human oversight.

Agent-Specific Benchmarks and Evaluation Devices

The agent evaluation ecosystem accommodates specialised benchmarks designed to verify capabilities previous straightforward textual content material period. AgentBench provides multi-domain testing all through internet navigation, database querying, and data retrieval duties. WebArena focuses on web-based brokers that ought to navigate web sites, fill varieties, and full multi-page workflows. GAIA checks frequent intelligence by duties requiring multi-step reasoning and kit use. ToolBench evaluates system utilization accuracy all through a whole bunch of real-world API eventualities.

These benchmarks arrange baseline effectivity expectations. Realizing that current best brokers get hold of 45% success on GAIA’s hardest duties helps you calibrate whether or not or not your 35% success charge represents a problem requiring construction changes or simply shows exercise problem. Benchmarks moreover enable direct comparisons when evaluating utterly completely different agent frameworks or base fashions to your use case.

[=”” products=”v1|335785385965|0″ visible=”description” title_tag=”div” img_ratio=”4×3″ =”2,1″]Implementation devices have emerged to make evaluation work in manufacturing strategies. LangSmith provides built-in agent tracing that visualizes system calls, intermediate reasoning steps, and backbone elements, with built-in evaluation pipelines that run robotically on every agent execution. Langfuse presents open-source observability with custom-made evaluation metric assist, letting you define domain-specific graders previous commonplace benchmarks. Frameworks initially constructed for RAG evaluation, like RAGAS, are being extended to assist agent-specific metrics resembling system identify accuracy and multi-step reasoning coherence.

You don’t must assemble evaluation infrastructure from scratch. Choose devices based on whether or not or not you need hosted choices with quick setup (LangSmith), full customization (Langfuse), or integration with present RAG pipelines (RAGAS extensions). The evaluation methodology points higher than the exact system, as long as your chosen platform helps the automated, human, or hybrid methodology your use case requires.

Establishing Your Agent Evaluation Pipeline

Environment friendly evaluation pipelines start with a foundation that many teams skip: the golden dataset. This curated assortment of 20-50 examples displays final inputs and anticipated outputs to your agent. Each occasion specifies the obligation, the correct decision, the devices that should be invoked, and the reasoning that must occur. You need this ground actuality reference to measure in opposition to.

Making a golden dataset requires exact engineering work. Analysis your manufacturing logs to go looking out marketing consultant duties all through problem ranges. For each exercise, manually verify the correct decision and doc why varied approaches would fail. Embrace edge cases that broke your agent all through testing. Change the dataset as you uncover new failure modes. This preparatory work determines all of the items that follows.

[=”” products=”REMPRIME” visible=”description” title_tag=”div” img_ratio=”4×3″]

Define clear success requirements aligned collectively along with your 4 pillars. For exercise success, specify whether or not or not partial completion counts or solely full choice points. For system utilization, resolve if calling redundant devices that don’t hurt the top end result constitutes failure. For reasoning, arrange whether or not or not you care about elegant choices or just acceptable ones. For cost-performance, set arduous limits on acceptable latency and token utilization. Obscure requirements lead to evaluation strategies that catch nothing useful.

Select three to five core metrics that span your 4 pillars. Exercise completion charge covers exercise success. System identify accuracy addresses utilization prime quality. An LLM-as-a-Select reasoning score handles coherence. Frequent tokens per exercise quantifies worth. These 4 metrics current secure safety with out overwhelming your monitoring dashboards.

Implement automated LLM-as-a-Select evaluation that runs on every deployment sooner than manufacturing. This catches obvious regressions like system calls with swapped parameters or reasoning that contradicts the obligation purpose. Automated evaluation ought to dam deployments that fail your core metrics by higher than threshold portions, normally 5-10% drops in success charge or 20-30% will enhance in worth per exercise.

Arrange human overview processes for failures and edge cases. When automated evaluation flags a failure, route it to space consultants who can resolve whether or not or not the evaluation was acceptable or revealed an evaluation bug. When brokers encounter situations not lined in your golden dataset, have folks assess the response prime quality and add validated examples to extend your ground actuality. This iterative enchancment cycle mirrors best practices from RAG strategies, the place regular evaluation and dataset enlargement drive reliability enhancements.

Start straightforward and add sophistication as you understand your failure patterns. Begin with exercise success expenses in your golden dataset. After you reliably measure completion, add system utilization metrics. Then introduce reasoning evaluation. Lastly, optimize cost-performance as quickly as prime quality metrics stabilize. Attempting full evaluation from day one creates complexity that obscures whether or not or not your agent actually works.

[=”” products=”v1|357708433185|0″ visible=”description” title_tag=”div” img_ratio=”4×3″ =”2,1″]Widespread Evaluation Pitfalls and The fitting strategy to Avoid Them

Three failure modes consistently derail agent evaluation efforts. First, teams contemplate on synthetic information that doesn’t replicate manufacturing complexity. Brokers that ace clear verify cases normally fail when precise clients current ambiguous instructions, reference earlier context, or combine quite a lot of requests. Resolve this by seeding your golden dataset with exact manufacturing failures barely than idealized eventualities.

Second, evaluation requirements drift from enterprise targets. Teams optimize for metrics like API identify low cost or reasoning magnificence that don’t map to what actually points: did the shopper’s disadvantage get solved? Usually validate that your evaluation metrics predict real-world success by evaluating agent scores in opposition to specific shopper satisfaction information or enterprise outcomes.

Third, evaluation strategies fail to catch regressions on account of verify safety doesn’t span the agent’s performance home. An agent might excel at information retrieval duties whereas silently breaking on calculation duties, nonetheless restricted verify examples solely practice the retrieval path. Examine design requires examples defending each design pattern your agent implements and each system in its repertoire.

These pitfalls share a normal root: treating evaluation as a one-time setup exercise barely than an ongoing observe. Reliable evaluation requires regular funding in verify case prime quality, metric validation, and safety enlargement as your agent’s capabilities develop.

Conclusion

Reliable agent evaluation requires measuring exercise success, system utilization prime quality, reasoning coherence, and cost-performance trade-offs by a combination of automated LLM-as-a-Select analysis, deterministic validation, and centered human overview. The muse is a golden dataset of 20-50 curated examples that define what success seems wish to your specific use case.

Start with straightforward exercise completion metrics on precise manufacturing eventualities. Add sophistication solely after you understand your failure modes. Choose evaluation devices based on whether or not or not you need hosted consolation or full customization, nonetheless acknowledge that your evaluation methodology points higher than the exact platform.

For implementation particulars on setting up manufacturing evaluation pipelines, check out Anthropic’s “Demystifying evals for AI brokers”, which provides an eight-step technical roadmap with grader schemas, YAML configurations, and precise purchaser case analysis exhibiting how teams like Descript and Bolt developed their evaluation approaches.

Provide hyperlink